Home

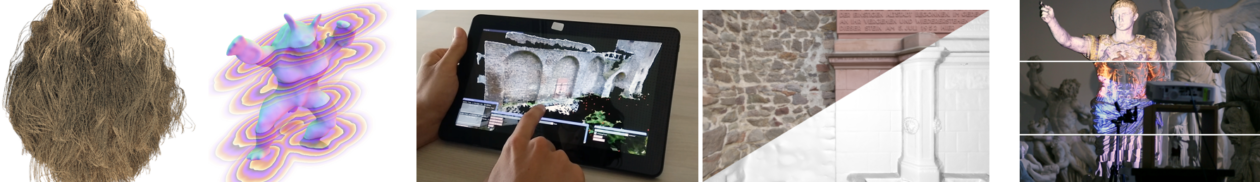

For more than 30 years, the Chair of Visual Computing has been researching methods for the generation, processing, and analysis of images and 3D models. This includes disciplines such as computer graphics, visualization, geometry processing, virtual reality, computer vision, and 3D reconstruction.

UPCOMING EVENTS:

24th/25th of April 2025

In 1992, the Chair of Computer Graphics (Lehrstuhl Graphische Datenverarbeitung) at FAU was founded by Hans-Peter Seidel and Thomas Ertl. Today, FAU has four independent groups working closely together on all topics of Visual Computing: the groups of Bernhard Egger, Tobias Günther, Marc Stamminger, and Tim Weyrich form the research cluster Visual Computing Erlangen (VCE).

In 2025, we would therefore like to take the opportunity to celebrate 33 years of Computer Graphics and the formation of Visual Computing in Erlangen with our friends and alumni. On April 24 and 25, we will host a scientific symposium and a social event, as well as talks on the history of Computer Graphics in Erlangen and on industrial applications by our alumni. There will be lab tours and demos of recent work, directly followed by the opportunity to visit FAU's Day of Computer Science, featuring two further first-class invited talks from the field of Visual Computing by Jan Kautz (NVIDIA) and Matthias Nießner (TUM), both of whom are also FAU alumni.

29th of September – 1st of October 2025

Redoutensaal

Theaterplatz 1

91054 Erlangen

The International Symposium on Vision, Modeling, and Visualization (VMV) will take place for the 30th time in 2025 in Erlangen, Germany. The conference is well established as Germany’s premier scientific meeting covering the full spectrum of visual computing. VMV offers researchers the opportunity to discuss a wide range of topics in an open, international, and interdisciplinary environment.

Authors are encouraged to submit their original research results, practice and experience reports, or novel applications.